VLA · World Models · Robotic Systems

Building the intelligence layer for physical robots.

I lead work on vision-language-action systems, world-state modeling, and production autonomy stacks that connect perception, planning, prediction, and action.

2026 - Present

Building VLA and world model systems for embodied robots.

01 · XLab · Embodied Intelligence

At XLab, my work focuses on embodied intelligence: using VLA policies, world-state modeling, multimodal perception, and closed-loop action generation to build robot systems that can understand, predict, and act in physical environments.


2024 - 2026

VLA / XPlanner for robotic decision and action.

02 · Robot Policy

At XPeng Motors, I worked on vehicle-side VLA/XPlanner systems: route-video-to-trajectory modeling, large-model scaling, dynamic interaction, and complex-scenario action generation. I think of this as robot policy learning under real product constraints.

2021 - 2024

Model the world before taking action.

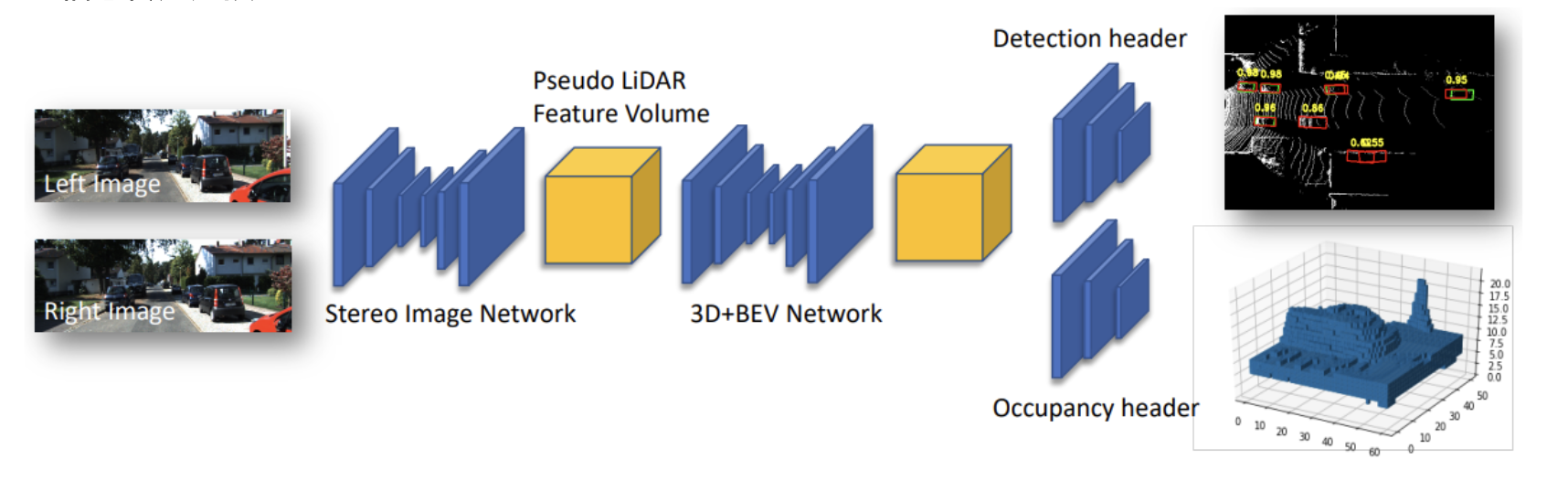

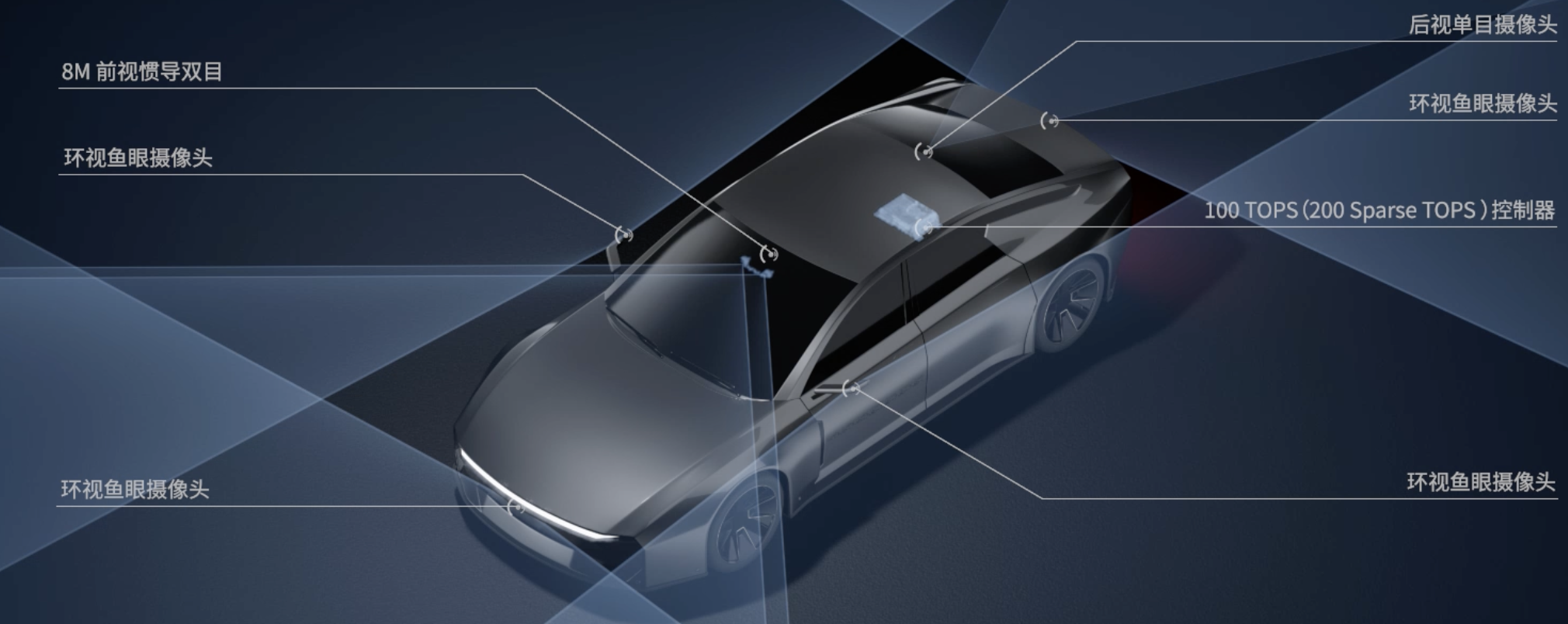

03 · World Models

My prior work at DJI Automotive focused on BEV perception, dynamic object detection, tracking fusion, occupancy-style scene understanding, and 4D annotation loops. These are the ingredients for world-state modeling in deployed robot systems.

2018 - 2021

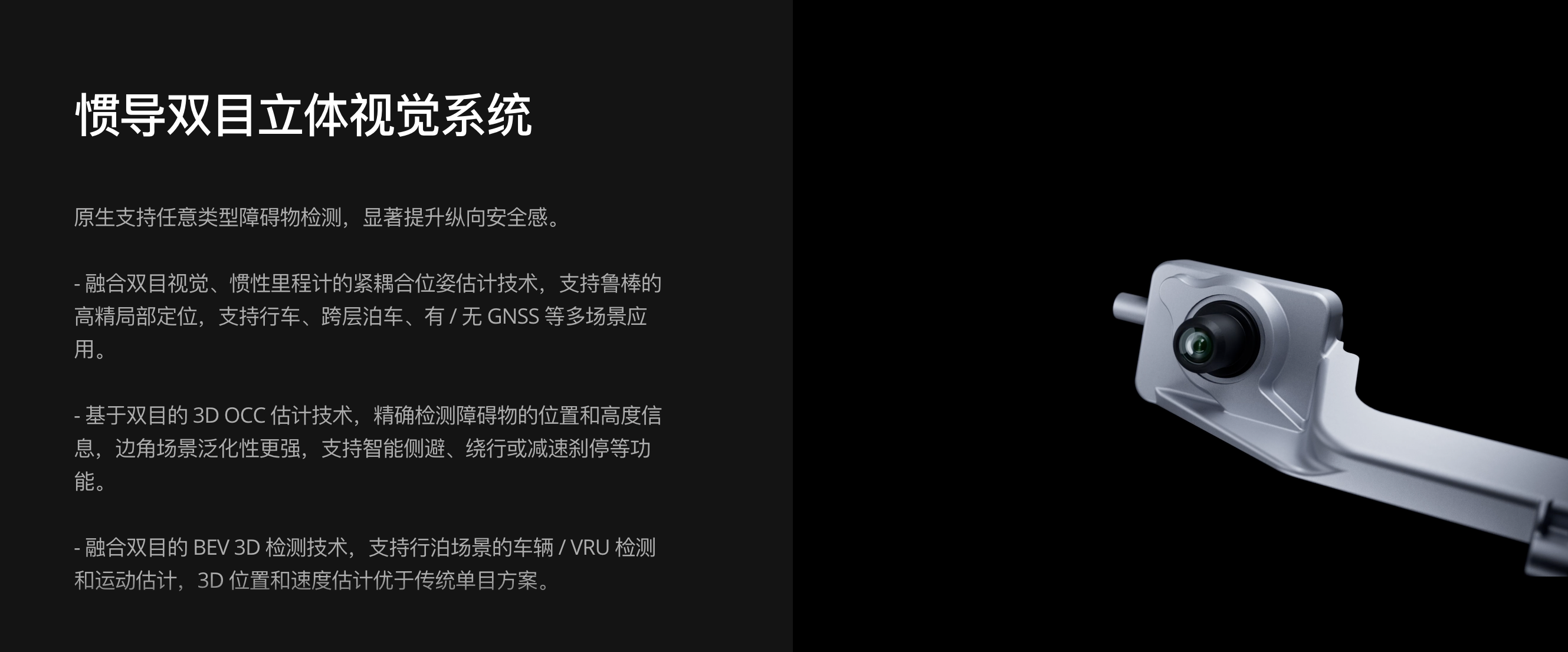

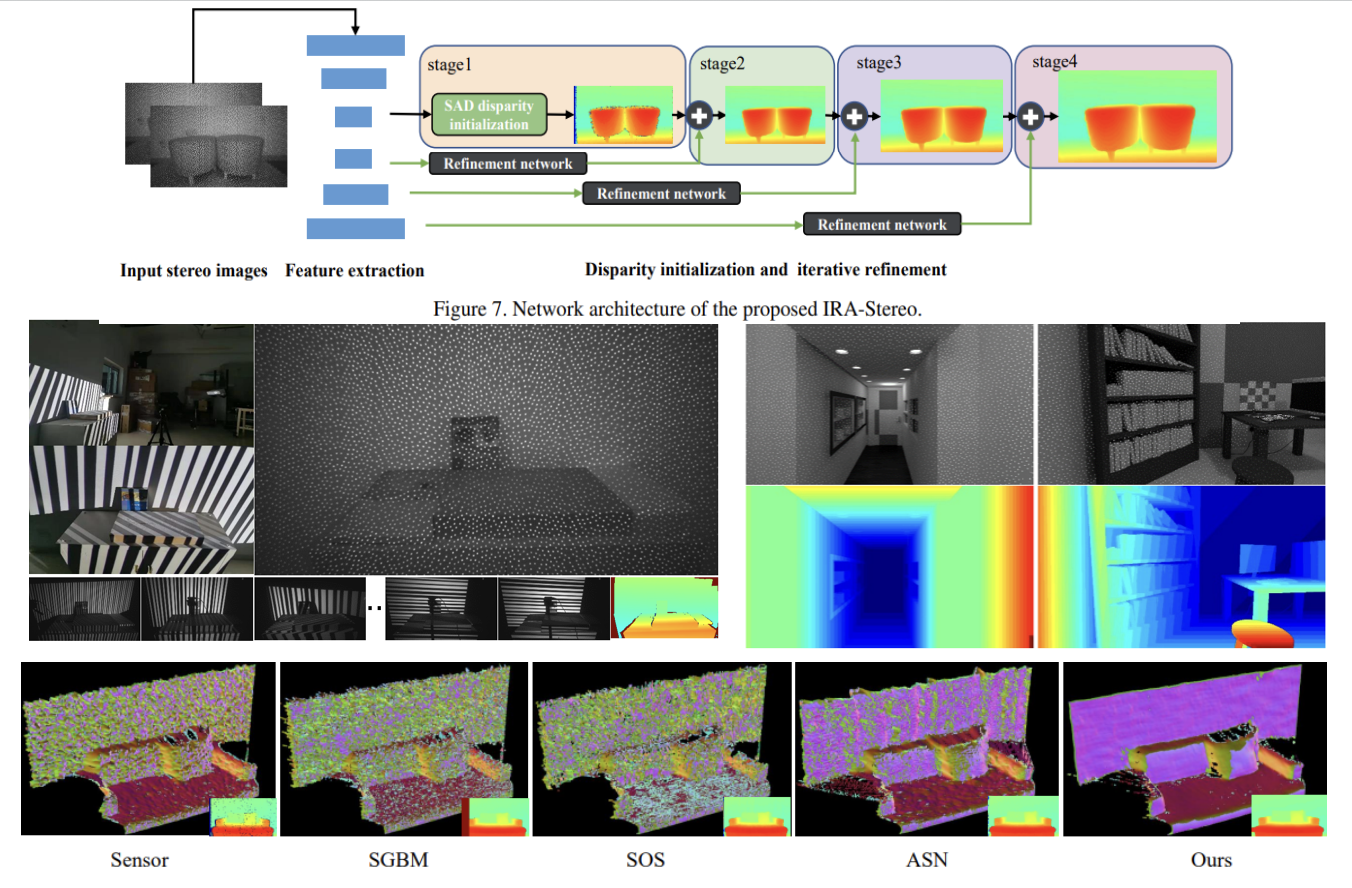

3D vision as the sensorimotor substrate.

04 · Perception Foundation

I built and maintained practical stereo and depth systems, including the open-source X-StereoLab (600+ stars, 100+ forks). Stereo matching, active stereo, RGB-D understanding, and road-structure perception form the lower-level grounding for robot intelligence.

Mission

Build general, reliable physical AI robot systems.

My goal is to build robot intelligence that can generalize across physical environments, deploy at real-world scale, and continuously improve through closed-loop data, world models, and self-evolving iteration.
